Artificial intelligence is rapidly transforming how businesses interact with customers. From AI chatbots to virtual assistants and automated help desks, companies are increasingly relying on AI-powered systems to deliver faster and more efficient customer support.

However, one major challenge organizations face when deploying these systems is AI hallucination: the model generates a response that sounds correct but is inaccurate or entirely fabricated.

For customer-facing systems, this problem can lead to misinformation, poor customer experiences, and even reputational damage. This is why many organizations are implementing AI guardrails to reduce hallucinations and ensure more reliable AI responses.

In this article, we will explore how AI guardrails work, why hallucinations occur in AI systems, and how enterprises can reduce these risks in customer-facing AI applications.

Understanding AI Hallucinations

AI hallucination is a well-known issue in large language models and generative AI systems. These models generate responses based on patterns learned during training rather than verified real-time knowledge.

Because of this, AI systems may sometimes produce answers that appear logical but are factually incorrect.

For example, a customer might ask a chatbot about a product warranty policy. If the AI system does not have accurate information available, it may still generate an answer instead of admitting uncertainty.

This fabricated response is known as an AI hallucination.

In customer-facing environments, hallucinations can create several problems.

A hallucinating assistant may state incorrect product details, misinform customers about company policies, or give inaccurate technical guidance.

Over time, repeated errors can reduce trust in the company’s AI systems.

Why Hallucinations Are a Serious Problem in Customer Support

Customer-facing AI systems are often used to answer thousands of queries every day. These systems may handle questions related to products, services, pricing, troubleshooting, or company policies.

When hallucinations occur in such environments, the consequences can be significant.

Misinformation to Customers

Incorrect responses can confuse customers and lead to misunderstandings about products or services.

Loss of Customer Trust

Customers expect reliable answers. If an AI system frequently gives wrong information, users may lose confidence in the company.

Increased Support Workload

When customers receive incorrect responses, they often escalate the issue to human support agents, which increases operational workload.

Brand Reputation Risks

Public-facing AI systems represent the brand. Incorrect responses can damage the company’s reputation.

Because of these risks, businesses must take proactive steps to reduce hallucinations before deploying AI assistants.

What Are AI Guardrails?

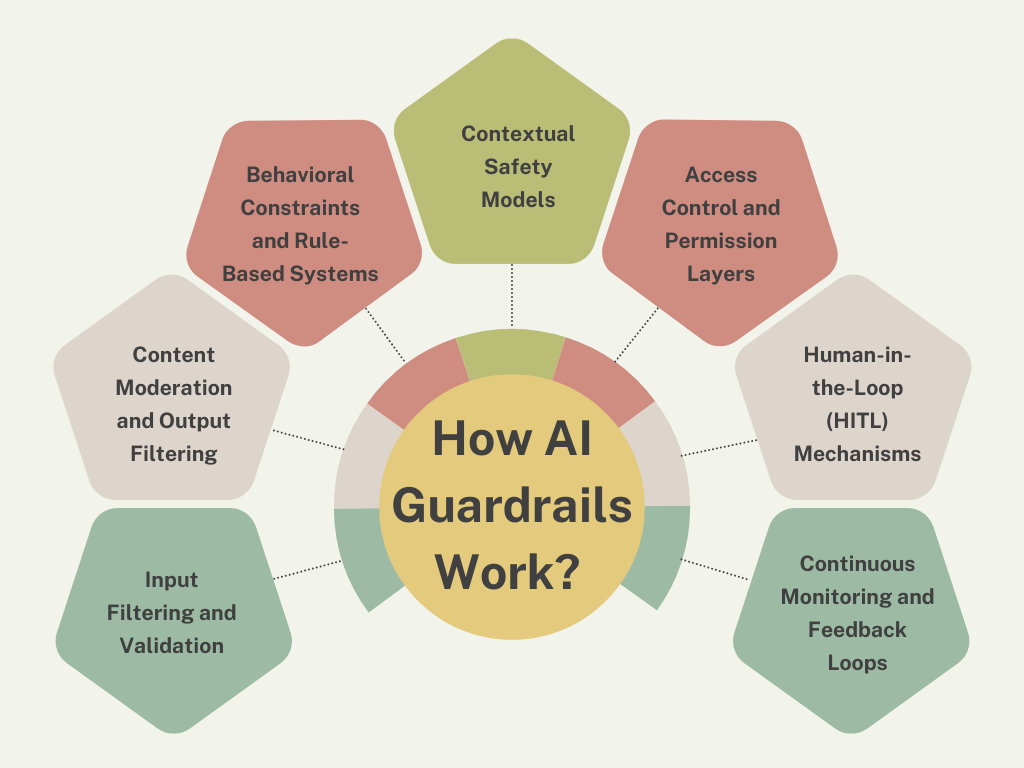

AI guardrails are safety mechanisms designed to monitor and control how AI systems generate responses.

Instead of allowing AI models to produce unrestricted outputs, guardrails act as control layers that validate inputs, retrieve verified information, and filter responses.

These safeguards help keep AI-generated answers accurate, safe, and aligned with company policies.

Guardrails typically operate across multiple stages of the AI response pipeline, including input validation, knowledge retrieval, response generation, and output monitoring.

How AI Guardrails Reduce Hallucinations

AI guardrails help minimize hallucinations by ensuring that AI systems rely on verified information rather than purely generative responses.

Several mechanisms contribute to this process.

Retrieval-Augmented Generation (RAG)

One of the most effective ways to reduce hallucinations is through retrieval-augmented generation (RAG).

In this architecture, the AI system retrieves relevant information from trusted knowledge sources before generating a response.

These sources may include:

- Internal documentation

- Product knowledge bases

- Customer support articles

- Enterprise databases

By grounding responses in real data, RAG significantly reduces the likelihood of fabricated answers, although it does not eliminate them: the model can still misread or ignore the retrieved text.
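
The retrieval step can be illustrated with a minimal sketch. It assumes a tiny in-memory knowledge base and uses simple word overlap as a stand-in for a real retriever; the `KNOWLEDGE_BASE` entries and the function names `retrieve` and `build_grounded_prompt` are hypothetical, and the call to the language model itself is left out.

```python
import re

# Hypothetical verified content; a real system would load this from company sources.
KNOWLEDGE_BASE = [
    {"id": "warranty", "text": "All laptops include a two-year limited warranty covering hardware defects."},
    {"id": "returns", "text": "Unopened items can be returned within 30 days of delivery for a full refund."},
    {"id": "shipping", "text": "Standard shipping takes three to five business days."},
]


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, documents, top_k=2):
    """Rank documents by word overlap with the query; skip documents with no overlap."""
    query_tokens = tokenize(query)
    scored = []
    for doc in documents:
        overlap = len(query_tokens & tokenize(doc["text"]))
        if overlap > 0:
            scored.append((overlap, doc))
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:top_k]]


def build_grounded_prompt(query, passages):
    """Build a prompt that restricts the model to the retrieved passages.

    Returns None when nothing was retrieved, so the caller can fall back
    instead of letting the model answer from memory.
    """
    if not passages:
        return None
    context = "\n".join(f"[{p['id']}] {p['text']}" for p in passages)
    return (
        "Answer using only the context below. "
        "If the context does not contain the answer, say you do not know.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


question = "How long is the laptop warranty?"
print(build_grounded_prompt(question, retrieve(question, KNOWLEDGE_BASE)))
```

Returning `None` when nothing relevant is found is a deliberate choice in this sketch: it gives the surrounding system a clear signal to use a fallback response rather than generate an unsupported answer.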

Knowledge Base Integration

Customer-facing AI systems often connect to structured knowledge bases.

These knowledge repositories contain verified company information such as policies, product specifications, and troubleshooting guides.

Guardrails ensure that AI responses rely on these verified sources instead of generating unsupported statements.

Input Validation

Guardrails also analyze user queries before they reach the AI model.

If a query requests sensitive data or information outside the system’s knowledge scope, the AI assistant can redirect the user or provide a safe fallback response.

This keeps the model from attempting to answer questions for which it has no verified information.
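
As a rough illustration, an input check might look like the sketch below. The topic list, the sensitive-data patterns, and the fallback message are all hypothetical placeholders; a production system would derive them from company policy and would likely use a trained classifier rather than keyword matching.

```python
import re

# Hypothetical scope: topics the assistant has verified content for.
SUPPORTED_TOPICS = {"warranty", "return", "refund", "shipping", "order"}

# Hypothetical patterns for input the assistant should not process.
SENSITIVE_PATTERNS = [
    re.compile(r"\b\d{13,16}\b"),                 # looks like a payment card number
    re.compile(r"\bpassword\b", re.IGNORECASE),   # credential-related requests
]

FALLBACK = "I can't help with that here. Let me connect you with a support agent."


def validate_query(query):
    """Return (allowed, message); message holds the fallback text when rejected."""
    for pattern in SENSITIVE_PATTERNS:
        if pattern.search(query):
            return False, FALLBACK
    words = set(re.findall(r"[a-z]+", query.lower()))
    if not words & SUPPORTED_TOPICS:
        # No supported topic mentioned: treat the query as out of scope.
        return False, FALLBACK
    return True, None


print(validate_query("What is your return policy?"))
print(validate_query("Who will win the election?"))
```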

Output Verification

Before a response is delivered to the customer, guardrails can evaluate the generated output.

Content moderation models or validation systems check for inaccuracies, unsafe language, or policy violations.

If a response fails validation, the system can block it or provide a safer alternative.
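
One simple form of output check is sketched below, under the assumption that the retrieved source passages are available at validation time. It flags any figure in the response that does not appear in the sources, plus a short list of banned phrases. The function names and the banned-phrase list are hypothetical, and real validators typically go further, for example by using a second model to judge whether each claim is supported.

```python
import re


def extract_numbers(text):
    """Return the set of numeric strings (integers or decimals) found in the text."""
    return set(re.findall(r"\d+(?:\.\d+)?", text))


def verify_response(response, source_passages, banned_phrases=("guarantee", "legal advice")):
    """Return a list of problems; an empty list means the response passed."""
    problems = []

    # Every figure in the response must also appear somewhere in the sources.
    source_numbers = set()
    for passage in source_passages:
        source_numbers |= extract_numbers(passage)
    unsupported = extract_numbers(response) - source_numbers
    if unsupported:
        problems.append(f"unsupported figures: {sorted(unsupported)}")

    # Substring match, so "guarantee" also catches "guaranteed".
    lowered = response.lower()
    for phrase in banned_phrases:
        if phrase in lowered:
            problems.append(f"banned phrase: {phrase}")
    return problems


def deliver(response, source_passages, fallback="Let me check that with a support agent."):
    """Send the response only if it passes verification; otherwise send the fallback."""
    return response if not verify_response(response, source_passages) else fallback


sources = ["Unopened items can be returned within 30 days of delivery."]
print(deliver("You can return it within 90 days.", sources))
```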

Confidence Scoring

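Some AI systems assign confidence scores to generated responses, for example based on how closely the retrieved documents match the query or on the model's own token probabilities.

If the score falls below a set threshold, guardrails may prompt the system to add a disclaimer or escalate the request to a human agent.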

This prevents the system from presenting low-confidence information as factual.
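
A threshold-based routing rule can be sketched as follows. The two thresholds (0.8 and 0.5), the action names, and the wording of the messages are illustrative assumptions, not recommended values; the sketch also assumes the confidence score has already been computed and normalized to the range 0 to 1.

```python
def route_response(answer, confidence, answer_threshold=0.8, disclaimer_threshold=0.5):
    """Decide how to handle an answer based on its confidence score (0.0 to 1.0)."""
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0.0 and 1.0")
    if confidence >= answer_threshold:
        # High confidence: send the answer unchanged.
        return {"action": "answer", "text": answer}
    if confidence >= disclaimer_threshold:
        # Medium confidence: send the answer but flag the uncertainty.
        return {
            "action": "answer_with_disclaimer",
            "text": answer + " Please confirm this with our support team.",
        }
    # Low confidence: do not show the answer; hand off to a person.
    return {"action": "escalate", "text": "I'm connecting you with a support agent."}


print(route_response("Your order ships in 3 days.", 0.92)["action"])
print(route_response("Your order ships in 3 days.", 0.30)["action"])
```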

Technologies That Support AI Guardrails

Modern AI guardrail systems rely on several technologies that work together to reduce hallucinations.

Vector Search

Vector search enables AI systems to retrieve semantically relevant information from large knowledge repositories.

Instead of relying only on keywords, vector search identifies documents with similar meaning.

This improves the relevance of retrieved passages, particularly when a customer's wording differs from the wording used in the documentation.
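
The core of vector search is a similarity comparison between a query vector and stored document vectors. The sketch below uses cosine similarity over hand-assigned three-dimensional vectors purely for illustration; in practice the vectors come from an embedding model and have hundreds or thousands of dimensions, and the document ids shown here are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors; 0.0 if either is all zeros."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Toy vectors assigned by hand; a real system would compute them with an embedding model.
DOCUMENT_VECTORS = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.1, 0.9, 0.1],
    "password-reset": [0.0, 0.1, 0.9],
}


def search(query_vector, document_vectors, top_k=1):
    """Return the ids of the top_k documents most similar to the query vector."""
    ranked = sorted(
        document_vectors.items(),
        key=lambda item: cosine_similarity(query_vector, item[1]),
        reverse=True,
    )
    return [doc_id for doc_id, _ in ranked[:top_k]]


# A query vector that points mostly in the same direction as "refund-policy".
print(search([0.8, 0.2, 0.1], DOCUMENT_VECTORS))
```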

Enterprise Knowledge Indexing

Organizations often store thousands of documents across internal systems.

Knowledge indexing organizes these documents so that AI assistants can retrieve relevant information quickly.

Proper indexing improves retrieval accuracy and reduces hallucination risk.
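
One common indexing structure is an inverted index, which maps each word to the documents that contain it so that lookups do not have to scan every document. The sketch below is a minimal version under that assumption; the document ids and titles are hypothetical, and enterprise systems usually add metadata, access controls, and vector indexes on top.

```python
import re
from collections import defaultdict


def build_index(documents):
    """Map each lowercase word to the set of document ids that contain it."""
    index = defaultdict(set)
    for doc_id, text in documents.items():
        for word in re.findall(r"[a-z0-9]+", text.lower()):
            index[word].add(doc_id)
    return index


def lookup(index, query):
    """Return the ids of documents that contain every word in the query."""
    words = re.findall(r"[a-z0-9]+", query.lower())
    if not words:
        return set()
    results = None
    for word in words:
        matches = index.get(word, set())
        # Intersect with earlier matches; "&" builds a new set, leaving the index untouched.
        results = matches if results is None else results & matches
    return results


docs = {
    "kb-1": "Resetting your router",
    "kb-2": "Router warranty terms",
    "kb-3": "Warranty claims for laptops",
}
index = build_index(docs)
print(lookup(index, "router warranty"))
```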

Content Moderation Models

Content moderation models analyze generated responses to detect harmful or inappropriate outputs.

These models ensure that responses follow company policies and maintain professional communication standards.

AI Governance Frameworks

Enterprises often implement governance frameworks that define rules for how AI systems can interact with users and access company data.

Guardrails enforce these policies automatically.

Benefits of AI Guardrails in Customer-Facing Systems

Implementing guardrails offers several advantages for organizations that deploy AI-powered customer support systems.

Higher Response Accuracy

AI systems that rely on verified knowledge sources are more likely to deliver accurate responses.

Improved Customer Experience

Customers receive reliable information quickly, which improves satisfaction and trust.

Reduced Operational Risk

Guardrails minimize the chance of misinformation or compliance violations.

Scalable Support Systems

With fewer errors and hallucinations, AI assistants can handle large volumes of customer queries efficiently.

Stronger Brand Trust

Reliable AI systems reflect positively on the company’s commitment to quality customer service.

Best Practices for Reducing AI Hallucinations

Organizations deploying AI assistants should follow several best practices to minimize hallucination risks.

Use Reliable Knowledge Sources

AI systems should rely on verified enterprise data instead of purely generative responses.

Combine Retrieval and Generation

Retrieval-augmented architectures significantly reduce hallucinations.

Monitor AI Behavior

Continuous monitoring helps identify errors and improve system reliability.

Implement Multi-Layer Guardrails

Input validation, knowledge retrieval, and output filtering should work together.
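
How the layers fit together can be sketched as a single function that runs each check in order and falls back as soon as one fails. The function `answer_with_guardrails`, its parameters, and the stub implementations below are hypothetical; each stage is passed in as a callable so that real validators, retrievers, and models can be swapped in.

```python
FALLBACK = "I'm not able to answer that. Let me connect you with a support agent."


def answer_with_guardrails(query, validate, retrieve, generate, verify):
    """Run a query through input validation, retrieval, generation, and output verification."""
    if not validate(query):
        return FALLBACK          # layer 1: query is out of scope or sensitive
    passages = retrieve(query)
    if not passages:
        return FALLBACK          # layer 2: no verified content to ground the answer
    draft = generate(query, passages)
    if not verify(draft, passages):
        return FALLBACK          # layer 3: draft is not supported by the sources
    return draft


# Stub stages for illustration only; real stages would be far more capable.
def validate(query):
    return "warranty" in query.lower()


def retrieve(query):
    return ["Laptops include a two-year warranty."]


def generate(query, passages):
    return passages[0]           # stand-in for a model call


def verify(draft, passages):
    return draft in passages     # strictest possible check: exact match with a source


print(answer_with_guardrails("How long is the warranty?", validate, retrieve, generate, verify))
```

Failing closed at every stage means a gap in any one layer still results in a safe fallback rather than an unverified answer.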

Maintain Updated Knowledge Bases

Outdated data can lead to incorrect responses, so knowledge repositories must be regularly updated.
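
A basic freshness check might flag documents that have not been reviewed within a chosen window. In the sketch below, the 180-day window, the `find_stale_documents` name, and the review dates are all illustrative assumptions; it also assumes each document records the date it was last reviewed.

```python
from datetime import date, timedelta


def find_stale_documents(documents, today, max_age_days=180):
    """Return sorted ids of documents last reviewed more than max_age_days before today."""
    cutoff = today - timedelta(days=max_age_days)
    return sorted(
        doc_id for doc_id, last_reviewed in documents.items() if last_reviewed < cutoff
    )


# Hypothetical review dates for two knowledge base articles.
review_dates = {
    "returns-policy": date(2024, 1, 10),
    "warranty-terms": date(2024, 6, 1),
}
print(find_stale_documents(review_dates, today=date(2024, 8, 1)))
```

Flagged documents can then be queued for review or temporarily excluded from retrieval until they are confirmed to be current.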

Real-World Use Cases

Many industries now rely on AI guardrails to improve customer-facing AI systems.

E-Commerce Platforms

AI assistants help customers find products, check order status, and understand return policies.

Guardrails ensure that the information provided is accurate.

Banking and Financial Services

AI assistants help customers with account information and financial guidance.

Guardrails help keep these responses within regulatory and compliance requirements.

Technology Companies

Technical support chatbots assist users with troubleshooting and documentation.

Guardrails ensure responses are based on verified support content.

Industry Reviews and Expert Insights

Technology leaders and AI governance experts consistently emphasize the importance of guardrails in AI systems.

Many enterprise technology teams report that guardrails significantly reduce hallucination rates in customer-facing AI assistants.

Customer support managers also highlight improvements in response reliability after implementing retrieval-based architectures and output validation systems.

Experts in responsible AI governance believe that guardrails will become a standard requirement for enterprise AI deployments in the coming years.

The Future of Reliable Customer-Facing AI

As AI systems continue to evolve, organizations will increasingly focus on building trustworthy AI systems rather than simply powerful ones.

Future customer-facing AI assistants will combine advanced reasoning capabilities with strong safety frameworks.

AI guardrails will remain a critical component of this architecture, ensuring that AI-generated responses are accurate, transparent, and aligned with business policies.

Businesses that prioritize responsible AI deployment will be better positioned to scale AI technologies while maintaining customer trust.

Frequently Asked Questions

What is an AI hallucination?

An AI hallucination occurs when an AI system generates information that appears accurate but is actually incorrect or fabricated.

Why are hallucinations dangerous in customer-facing AI systems?

Hallucinations can provide incorrect information to customers, damage brand reputation, and increase the workload for human support teams.

How do AI guardrails reduce hallucinations?

Guardrails combine mechanisms such as input filtering, knowledge retrieval, and response validation so that AI responses rely on verified information.

What is retrieval-augmented generation?

Retrieval-augmented generation is a method where AI systems retrieve relevant information from knowledge bases before generating responses.

Are AI guardrails necessary for all customer-facing AI systems?

Yes. Guardrails are essential for ensuring accuracy, protecting sensitive information, and maintaining reliable AI interactions with customers.